1. Pull the Nginx image first

docker pull nginx

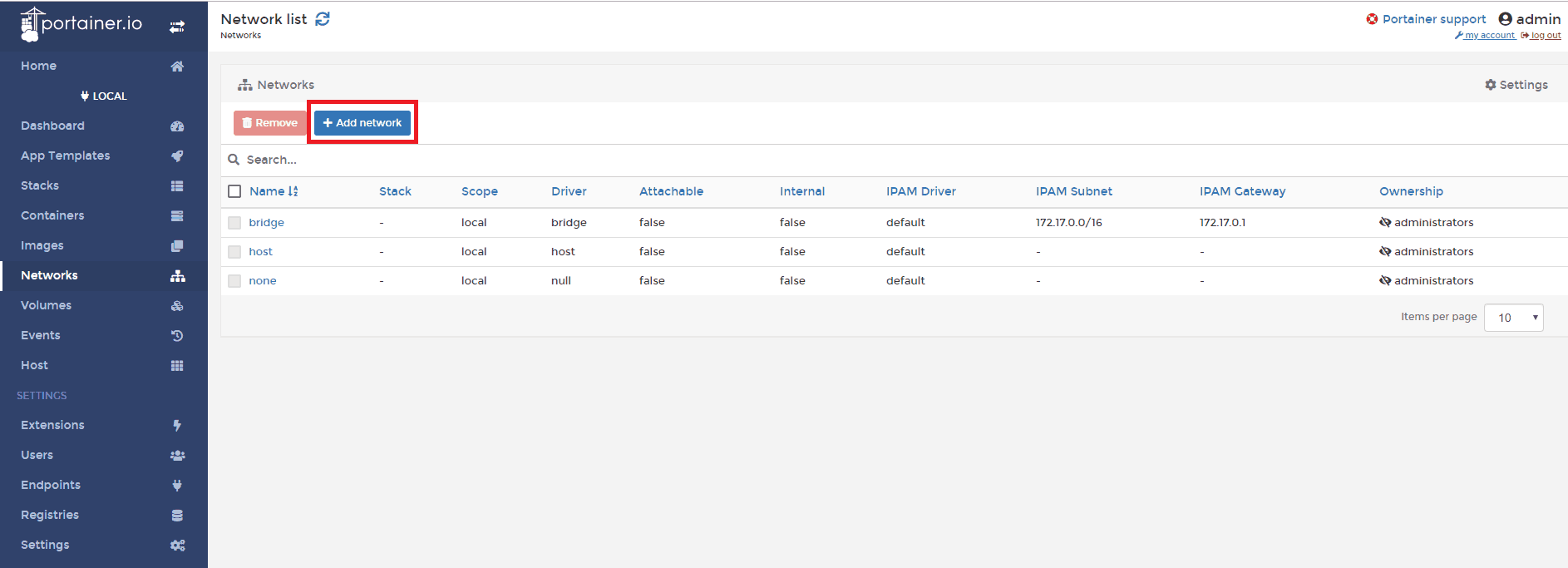

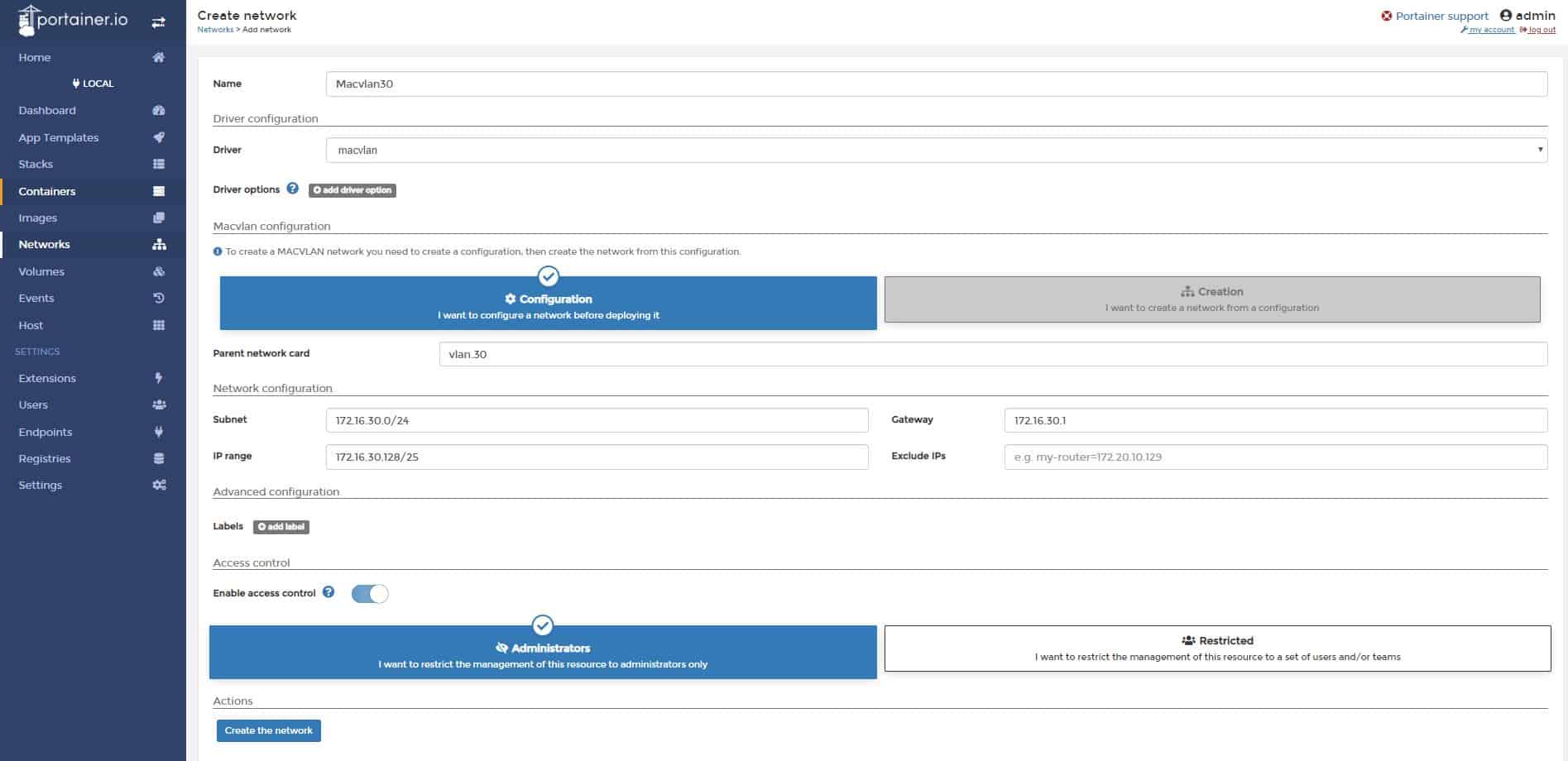

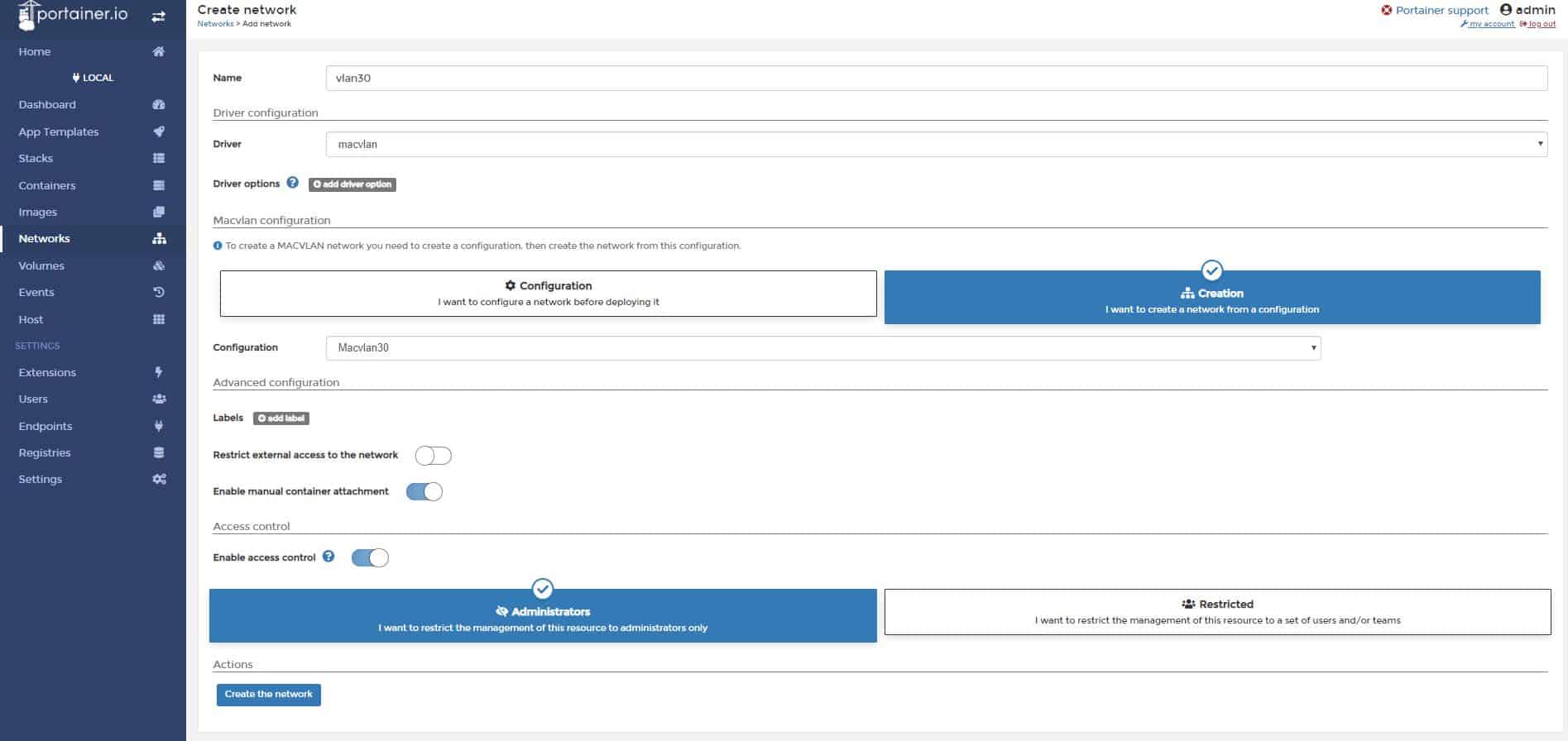

2. Create a new Docker network

Use the macvlan driver to create the new network. First check which subnet your Docker host is on, because the Docker network should use the same subnet. This way, machines on the LAN can communicate directly with the containers.

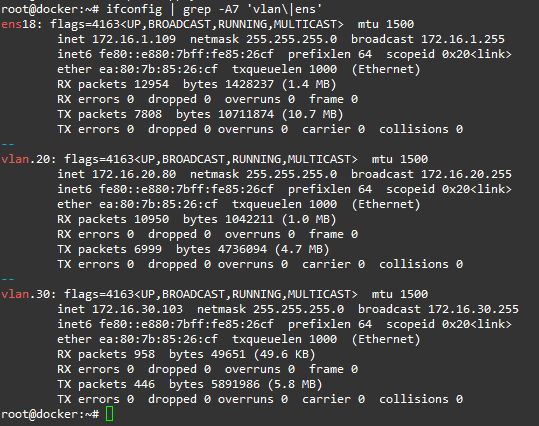

ifconfig or ip addr

Find your network interface (ethX or ensX). In my case it's ens18, with subnet 172.16.20.0/24.
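Note that `ip addr` prints the host's own address with a prefix length (for example 172.16.20.5/24), not the network address that `--subnet` expects. A minimal sketch of deriving one from the other is below; `cidr_network` is a hypothetical helper name, it handles IPv4 only, and the sample address is an assumption.

```shell
# cidr_network: print the network address (in CIDR form) for a host
# address such as 172.16.20.5/24. Hypothetical helper, IPv4 only.
cidr_network() {
  ip=${1%/*}        # part before the slash, e.g. 172.16.20.5
  prefix=${1#*/}    # part after the slash, e.g. 24
  old_ifs=$IFS
  IFS=.
  set -- $ip        # split the address into four octets: $1..$4
  IFS=$old_ifs
  # Pack the four octets into one 32-bit number
  addr=$(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
  # Build the netmask from the prefix length
  if [ "$prefix" -eq 0 ]; then
    mask=0
  else
    mask=$(( (0xFFFFFFFF << (32 - $prefix)) & 0xFFFFFFFF ))
  fi
  # Clearing the host bits leaves the network address
  net=$(( addr & mask ))
  printf '%d.%d.%d.%d/%d\n' \
    $(( (net >> 24) & 255 )) $(( (net >> 16) & 255 )) \
    $(( (net >> 8) & 255 )) $(( net & 255 )) "$prefix"
}
```

For example, `cidr_network 172.16.20.5/24` prints `172.16.20.0/24`. On a host with a single IPv4 address on the interface, the input could come from `ip -o -4 addr show dev ens18 | awk '{print $4}'`.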

Now create a new network in the same subnet:

docker network create -d macvlan \
  --subnet=172.16.20.0/24 \
  --gateway=172.16.20.1 \
  -o parent=ens18 localLAN

Command explanation:

-d macvlan = macvlan driver

--subnet = subnet of your local LAN

--gateway = your router IP

-o parent = network interface on the host with the same subnet

localLAN = name of the new Docker network, you can customize it
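Running the create command a second time fails because the network name is already taken. One way to make the step repeatable is to check for the network first. This is only a sketch: `ensure_macvlan` is a hypothetical wrapper name, and the arguments simply mirror the values used in this post.

```shell
# ensure_macvlan NAME SUBNET GATEWAY PARENT
# Create the macvlan network only if a network with that name
# does not exist yet. Hypothetical wrapper around the docker CLI.
ensure_macvlan() {
  name=$1 subnet=$2 gateway=$3 parent=$4
  # "docker network inspect" exits non-zero when the network is missing
  if docker network inspect "$name" >/dev/null 2>&1; then
    echo "network $name already exists"
    return 0
  fi
  docker network create -d macvlan \
    --subnet="$subnet" \
    --gateway="$gateway" \
    -o parent="$parent" "$name"
}
```

With the values from this post the call would be `ensure_macvlan localLAN 172.16.20.0/24 172.16.20.1 ens18`. Afterwards, `docker network ls` should list localLAN with the macvlan driver.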

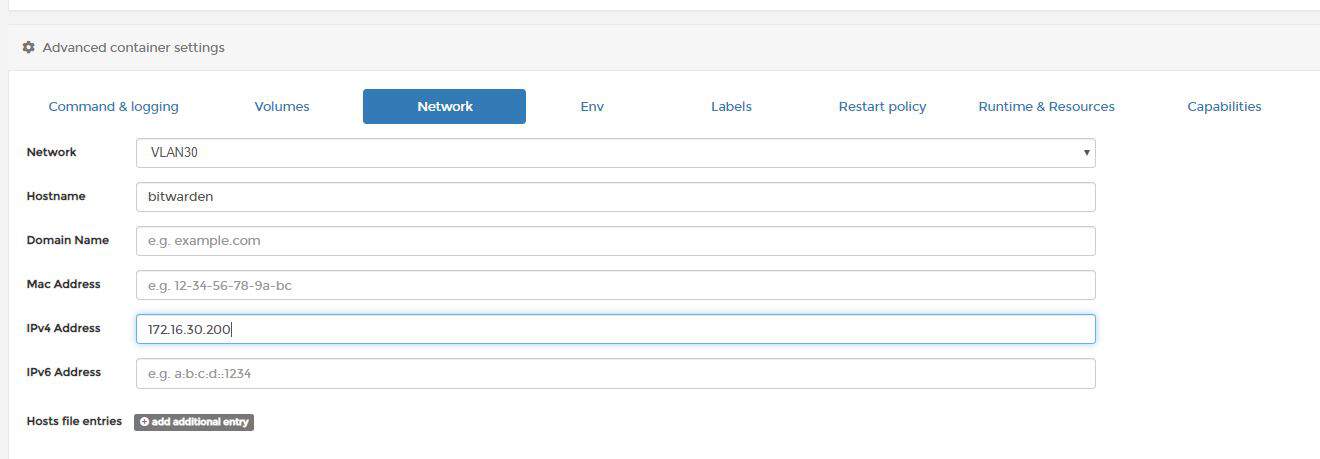

You can now run your container and access it directly from the LAN.

docker run --net localLAN \
  --ip=172.16.20.82 \
  --name nginx_test -d nginx

Command explanation:

--net localLAN = the Docker network we defined earlier

--ip=172.16.20.82 = the IP you would like to assign to the container; make sure it is inside the subnet and not already used by another device on your LAN

--name nginx_test = the name of the newly created container

-d nginx = run the nginx image detached (in the background)
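Before assigning the address, it can help to check whether something on the LAN already answers on it. The sketch below uses a single ping with a one-second timeout (Linux `ping` options). Both function names are hypothetical, and a silent host is only probably free, since devices can be powered off or can block ICMP.

```shell
# ip_in_use ADDRESS: exit 0 if something answers a single ping.
ip_in_use() {
  # -c 1: send one echo request, -W 1: wait at most one second
  ping -c 1 -W 1 "$1" >/dev/null 2>&1
}

# check_ip ADDRESS: report whether the address looks safe to assign.
check_ip() {
  if ip_in_use "$1"; then
    echo "$1 answers ping, pick another address"
    return 1
  fi
  echo "$1 did not answer, probably free"
}
```

Run `check_ip 172.16.20.82` before the `docker run` command. Checking your router's DHCP range and lease table as well is the safer option.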

3. Nginx specific

We want the Nginx configs and web content stored on our Docker host, so we are going to mount local directories into the Docker container.

First, we need to run the default nginx container and copy its default files to our Docker host. If the container from step 2 is still running, skip this command; starting a second container with the same name will fail.

docker run --net localLAN \
  --ip=172.16.20.82 \
  --name nginx_test -d nginx

If you forget the IP of the container, you can check it with the following command:

docker inspect -f '{{range .NetworkSettings.Networks}}{{.IPAddress}}{{end}}' container_name

Create nginx folders on the docker host:

mkdir -p /etc/nginx /var/www

Copy default config files from container to the docker host:

docker cp nginx_test:/etc/nginx/ /etc/
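The command above copies only the configuration. If you also want the image's default web page as a starting point, the web root can be copied the same way. This is a sketch that assumes the container name and paths used in this post; `copy_default_site` is a hypothetical helper name.

```shell
# copy_default_site [CONTAINER] [DEST]
# Copy the image's default web root into a directory on the host.
# The trailing "/." copies the directory's contents rather than the
# directory itself.
copy_default_site() {
  container=${1:-nginx_test}
  dest=${2:-/var/www}
  docker cp "$container":/usr/share/nginx/html/. "$dest"/
}
```

Calling `copy_default_site` with no arguments copies from nginx_test into /var/www. This is optional, because any index.html you create in /var/www later replaces the default page.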

Stop and delete the running container:

docker stop nginx_test

docker rm nginx_test

Start the container with mounted volumes:

docker run --net localLAN --ip=172.16.20.82 --name nginx_test \
  -v /var/www:/usr/share/nginx/html \
  -v /etc/nginx:/etc/nginx \
  -d nginx

Go to /var/www on your Docker host and create a file index.html with the following content:

<html>
<head><title>This is title</title></head>
<body>
Hello world
</body>
</html>

Your website should be displayed now at http://172.16.20.82.

You can change the content of the website live, without restarting the container. The content folder is on the Docker host at /var/www/.
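Changes to the files under /etc/nginx are different: Nginx reads its configuration at startup, so edits there take effect only after a reload. A minimal sketch of a reload that tests the configuration first is below; `reload_nginx` is a hypothetical helper name, and the container is assumed to be running.

```shell
# reload_nginx [CONTAINER]
# Validate the configuration inside the container, then reload it.
reload_nginx() {
  container=${1:-nginx_test}
  # nginx -t parses the configuration and reports errors without applying it
  docker exec "$container" nginx -t || return 1
  # nginx -s reload asks the running master process to re-read the config
  docker exec "$container" nginx -s reload
}
```

Testing first means a typo in the config stops the function at the check instead of reaching the reload step.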

If your container crashes for some reason, try running it in interactive mode; it should display the error:

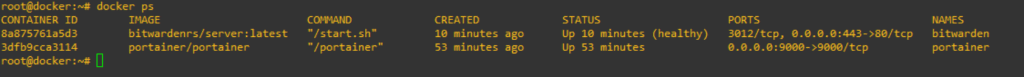

First, check whether the container is running:

docker ps

If the container is down, start it interactively to display errors:

docker start -i nginx_test

Or use docker logs:

docker logs nginx_test
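The checks above can be combined into one small helper. This is a sketch, not a definitive tool: `container_status` is a hypothetical name, and the 20-line tail is an arbitrary choice.

```shell
# container_status [CONTAINER]
# Print the container's state; if it is not running, show recent logs.
container_status() {
  container=${1:-nginx_test}
  # .State.Status is e.g. "running", "exited" or "created"
  status=$(docker inspect -f '{{.State.Status}}' "$container" 2>/dev/null) || {
    echo "no container named $container"
    return 1
  }
  echo "$container is $status"
  if [ "$status" != running ]; then
    # The last lines of the log usually contain the startup error
    docker logs --tail 20 "$container"
  fi
}
```

For a crashed Nginx container, the log tail typically points at the config file and line that failed to parse.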